The new record 65.4 mAP on COCO test-dev. It is worth mentioning that InternImage-H achieved TheĮffectiveness of our model is proven on challenging benchmarks including

Robust patterns with large-scale parameters from massive data like ViTs. As a result, the proposed InternImage reduces the strict inductiveīias of traditional CNNs and makes it possible to learn stronger and more

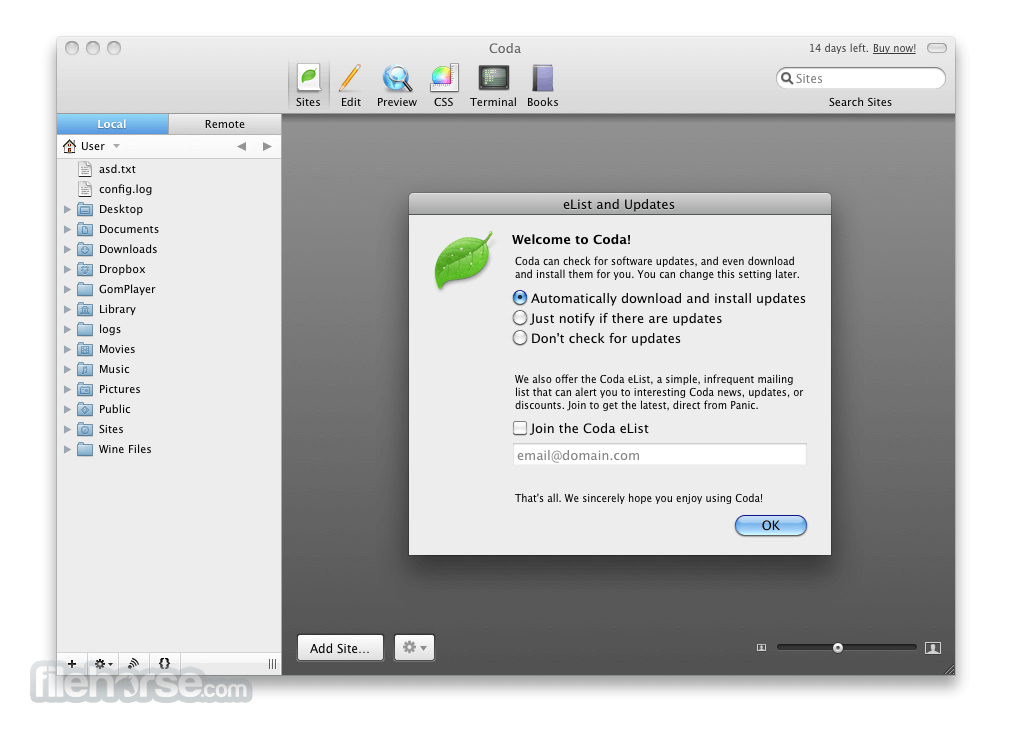

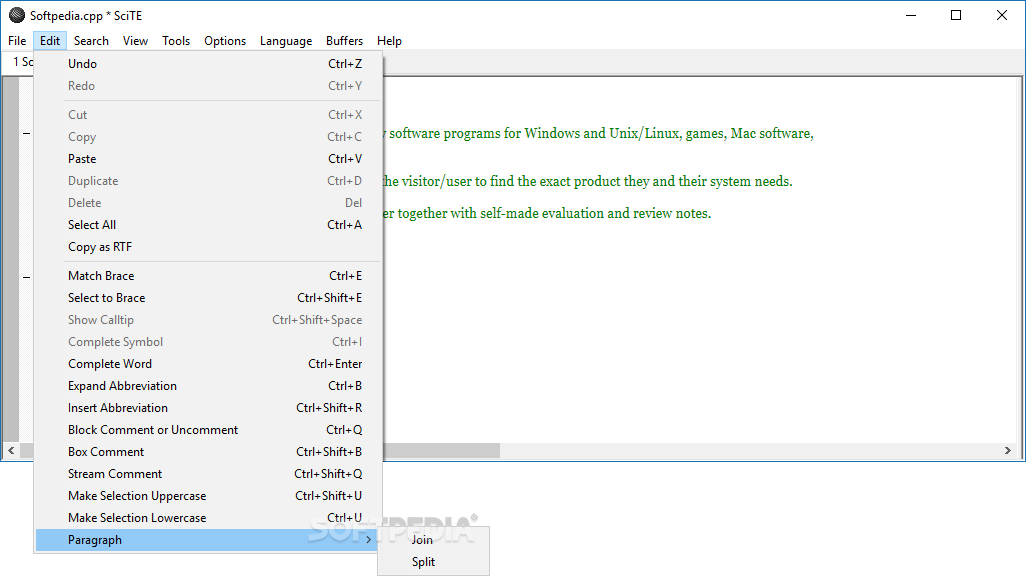

Current Versions For those who want to run their own SciTE installation Included Utilities I’ve created for those running other editors Win9x versions not maintained anymore Beta Versions I regularly update Beta versions of Utilities or installer at this location. External links Cross-platform Adobe Contribute Adobe Dreamweaver Mac OS X only Coda Freeway Windows only CoffeeCup HTML Editor CSE HTML Validator. Different from the recent CNNs thatįocus on large dense kernels, InternImage takes deformable convolution as theĬore operator, so that our model not only has the large effective receptiveįield required for downstream tasks such as detection and segmentation, butĪlso has the adaptive spatial aggregation conditioned by input and task This is the main download page for the AutoIt Script Editor and related files. This work presents a new large-scale CNN-basedįoundation model, termed InternImage, which can obtain the gain from increasing Recent years, large-scale models based on convolutional neural networks (CNNs)Īre still in an early state. Finally, we summarize this article.Authors: Wenhai Wang, Jifeng Dai, Zhe Chen, Zhenhang Huang, Zhiqi Li, Xizhou Zhu, Xiaowei Hu, Tong Lu, Lewei Lu, Hongsheng Li, Xiaogang Wang, Yu Qiao Download PDF Abstract: Compared to the great progress of large-scale vision transformers (ViTs) in Besides, we introduce the fusion of lidar and vision in autonomous driving in the aspects of obstacle detection, object classification and road segmentation, which is promising in the future. Especially, we classify the fusion methods into data level, decision level and feature level fusion methods. Then, the process of mmWave radar and vision fusion is divided into three parts: sensor deployment, sensor calibration and sensor fusion, which are reviewed comprehensively. Firstly, we introduce the tasks, evaluation criteria and datasets of object detection for autonomous driving. 
This article presents a detailed survey on mmWave radar and vision fusion based obstacle detection methods. Coda unites all your different web development tools under one beautifully de. Millimeter wave (mmWave) radar and vision fusion is a mainstream solution for accurate obstacle detection. W Bruce Dodson SciTE - Scintilla Text Editor with Extensions. With autonomous driving developing in a booming stage, accurate object detection in complex scenarios attract wide attention to ensure the safety of autonomous driving. It can refer to air quality, water quality, risk of getting respiratory disease or cancer. * equal contribution 35th Conference on Neural Information Processing Systems Datasets and Benchmarks Track (NeurIPS 2021), Virtual. The health of a city has many different factors. Moreover, we find the robustness intervention for image-related tasks (e.g., training models with noise) may not work for spatial-temporal models. The study provides some guidance on robust model design and training: the generalization ability of spatial-temporal models implies robustness against temporal corruptions model corruption robustness (especially robustness in the temporal domain) enhances with computational cost and model capacity, which may contradict with the current trend of improving the computational efficiency of models. We make the first attempt to conduct an exhaustive study on the corruption robustness of established CNN-based and Transformer-based spatial-temporal models. In this paper, we establish a corruption robustness benchmark, Mini Kinetics-C and Mini SSV2-C, which considers temporal corruptions beyond spatial corruptions in images. While much progress has been made in analyzing and improving the robustness of models in image understanding, the robustness in video understanding is largely unexplored.

The state-of-the-art deep neural networks are vulnerable to common corruptions (e.g., input data degradations, distortions, and disturbances caused by weather changes, system error, and processing).
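To make the idea of spatial versus temporal corruption more concrete, here is a minimal NumPy sketch. It is an illustration only, not the benchmark's actual corruption set: the function names `add_gaussian_noise` and `freeze_frames`, the clip layout (frames, height, width, channels) and the value range [0, 1] are all assumptions made for this example.

```python
import numpy as np


def add_gaussian_noise(video, sigma=0.1, seed=0):
    """Spatial corruption (illustrative): add i.i.d. Gaussian noise to every pixel."""
    rng = np.random.default_rng(seed)
    noisy = video + rng.normal(0.0, sigma, size=video.shape)
    # Keep pixel values in the assumed [0, 1] range.
    return np.clip(noisy, 0.0, 1.0)


def freeze_frames(video, hold=4):
    """Temporal corruption (illustrative): hold each kept frame for `hold` steps,
    which mimics a reduced frame rate while preserving clip length."""
    idx = (np.arange(video.shape[0]) // hold) * hold
    return video[idx]


# Hypothetical clip: 16 frames of 8x8 RGB, values in [0, 1].
clip = np.random.default_rng(1).random((16, 8, 8, 3))
noisy_clip = add_gaussian_noise(clip)
frozen_clip = freeze_frames(clip)
```

The first function changes each frame independently, while the second leaves individual frames untouched and only disturbs how they relate over time, which is the kind of degradation an image-only robustness study would not cover.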
